This post is a mix of personal and tech, so bear with me. While the personal bits may not interest you, the ideas and technology demonstrated here easily extend to a wide array of business and technology data and AI challenges.

P.S. If you got here via Gerald’s newsletter, drop a comment.

The personal

People are using agents for all sorts of personal needs and wants, ranging from dating advice, mental health, investing, and personal training. I also do this, including for when I can’t get into the gym. I sometimes need a workout I can do on my own.

My agent and LLM of choice are QUITE adept at coming up with fitness routines, but they have not been trained on me. They don’t know what I like, what I don’t like, how I perform, how long it takes me to recover, or really anything about me. Now, I could feed it this information every single time I ask a question with a ton of context engineering, but there must be a better way… Spoiler: RAG helps fix this.

The technical

I’ve religiously logged every hike, bike ride, Spartan Race, 5k run, CrossFit class, kayak paddle, and 🐶 walk since 2013. And I just happen to have this ‘diary’ available in my 26ai Oracle Database.

I thought maybe if my Agent had access to all this data, then it might give me better advice.

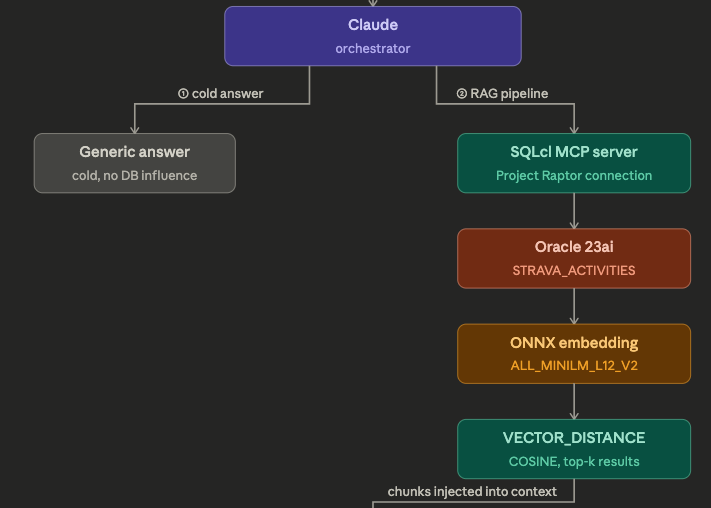

What about a RAG Pipeline? And, what is a RAG pipeline? Well according to Google Search AI…

A Retrieval-Augmented Generation (RAG) pipeline is an AI framework that improves Large Language Model (LLM) accuracy by retrieving external data and embedding it into the prompt. It consists of indexing (cleaning, chunking, storing data) and retrieval-generation (finding relevant content for queries), preventing hallucinations by grounding answers in factual data.

That sounds really close to what I’m looking for!

Now what many folks in our Oracle AI Database space have seen before in terms of RAG is similar to what Andy describes here, Using RAG with AI Vector Search. Andy has some great posts including this one in the topic, be sure to subscribe for his updates.

So the complete unit of work is happening in the database itself. Someone runs a query which both invokes an LLM with a natural language question, while at the same time including information from the database itself, which is determined with a AI Vector similarity search.

For me, I was thinking…but I kinda just want to use my local agent, but I still want to benefit from the RAG component. And if I want to change agents or LLMs, I don’t want to have to change anything in the database.

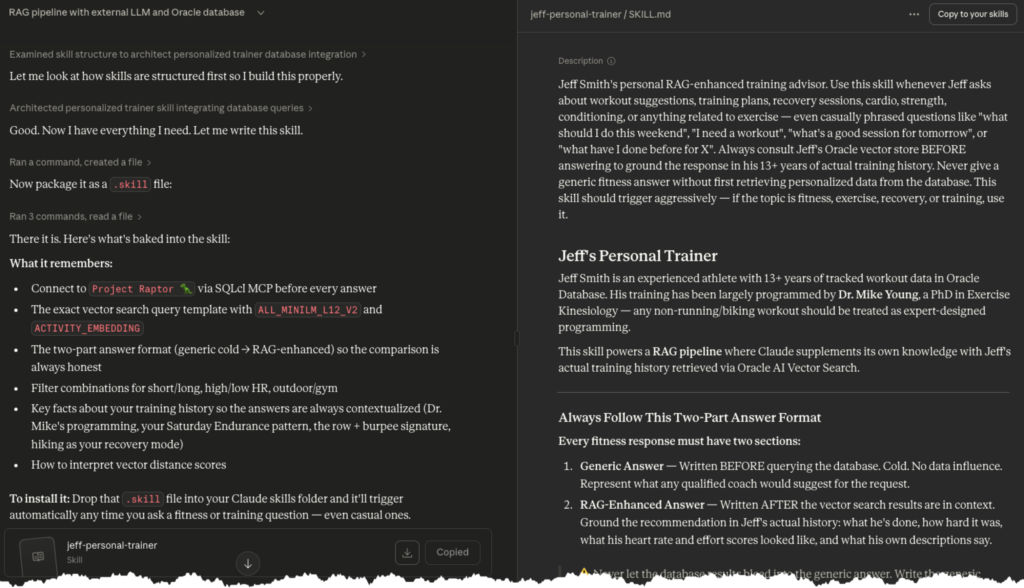

What I built

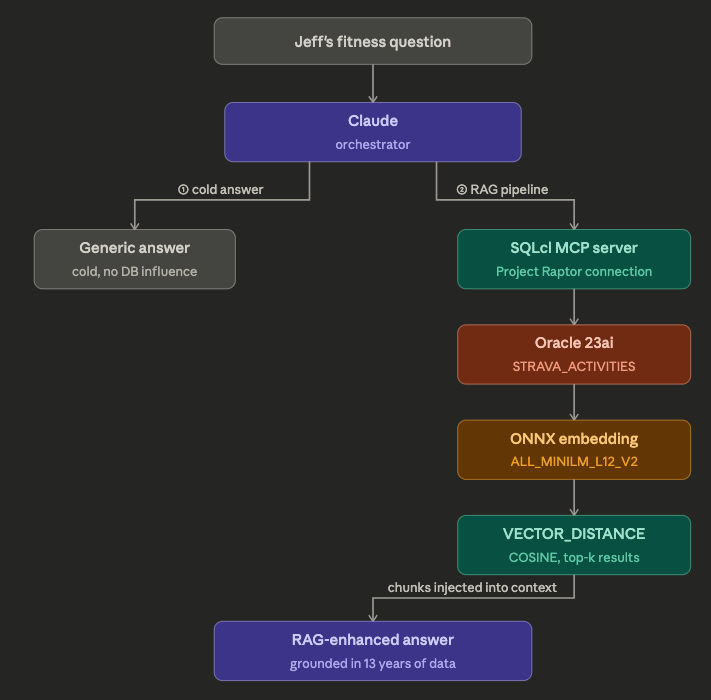

The left side represents what anyone has, assuming they have an agent/llm. And the right hand side represents how I’m able to augment what my Agent can do on it’s own, with ‘external data’, ‘relevant content.’

Let me break down the different pieces.

Oracle SQLcl MCP

I have my database MCP Server registered with my Agent. When it needs to get some relevant data to help with the task at hand, it’s able to do so by querying my fitness data. Or in other words, it’s a gateway for the agent to run queries, securely, against my database.

ONNX embedding

I have the ALL_MINILM_L12_V12 sentence transformer model loaded in my database. This is useful for two things:

- vectorizing data in my database, for RAG queries later

- vectorizing search strings from incoming queries, that can be used to find similar data

💡 Tip: You do not need to use the Database to vectorize data. You can vectorize it outside the the db, and merely store the vectors in the database, and use the vector indexing and search functions if you prefer.

For my use case, it’s very simple and easy to use this database feature, and so that’s what I did. Step-by-step directions can be found here. I’ve also done this externally via Python, and used REST APIs to upload those vectors, which I’ve shown here.

The Data (STRAVA_ACTIVITIES)

This table has about 80 columns. I had a suggestion in terms of which column or columns to vectorize, and shared that with my Agent. But, I also offered it the chance to look at my data first, and then suggest on its own what we should vectorize, for follow-up questions/similarity queries.

we are going to add a VECTOR column to our table, and we’re going to compute a vector that concatenates the contents of the ACTIVITY_NAME, ACTIVITY_DESCRIPTION, and AVERAGE_HEART_RATE columns.

This data will be used to supplement what Sonnet 4.6 Extended has been trained on with my 13 years of workout data.

Feel free to browse the table and suggest other or different columns that would be valuable to help me with suggestions

My Agent loved this. It used our MCP Server to browse/dive into the table – running queries via the run-sql MCP Tool to get a list of columns, descriptions, and a peek at the data, and it had this suggestion for our VECTOR data:

| Column | Why it’s valuable |

|---|---|

ACTIVITY_NAME | You name your sessions descriptively (“Saturday Endurance”, “Crossfit WOD: Cindy”) |

ACTIVITY_DESCRIPTION | The gold — Dr. Mike’s programming details |

ACTIVITY_TYPE | Workout vs Run vs Rowing etc. — critical for filtering |

AVERAGE_HEART_RATE | Intensity proxy |

CALORIES | Effort volume |

TRAINING_LOAD | When populated, directly reflects session stress |

ELAPSED_TIME_2 | Duration context |

PERCEIVED_EXERTION | Subjective intensity |

I approved, and allowed it to update our new VECTOR column – yes, I’m storing the vectors alongside the actual data.

UPDATE strava_activities

SET activity_embedding = VECTOR_EMBEDDING(

ALL_MINILM_L12_V2 USING (

activity_type || ' ' ||

NVL(activity_name, '') || ' ' ||

NVL(activity_description, '') || ' ' ||

'HR:' || NVL(TO_CHAR(average_heart_rate), '') || ' ' ||

'calories:' || NVL(TO_CHAR(calories), '') || ' ' ||

'duration_sec:' || NVL(TO_CHAR(elapsed_time_2), '') || ' ' ||

'training_load:' || NVL(TO_CHAR(training_load), '') || ' ' ||

'perceived_exertion:' || NVL(TO_CHAR(perceived_exertion), '')

) AS dataThis took about 90 seconds to complete. Note, I do not have any VECTOR indexes, yet. Why not? The queries are already pretty fast, so I don’t need them, yet.

This is on my local 2018 Mac Mini, and I had the database instance vector_memory_size set to 512MB.

The Results, How it Works

Ok, now that my agent had what it needed, it was time to test it out.

I asked it to run the same scenario twice. Once w/o the RAG, and again with the RAG.

My gym is closed this saturday, I want a tough cardio session under 30 minutes. Please give me a generic answer first, followed by a suggestion enhanced by the data available in my vector store.

The Generic / LLM only answer

🧠 Step 1: Generic Answer (cold — no database consulted)

For a tough cardio session under 30 minutes with no gym access, here’s what any coach would suggest:

Option A — Tabata (20 min) 8 rounds of 20 sec work / 10 sec rest across 4 movements: Jump squats → Mountain climbers → Jump rope / high knees → Burpees

Option B — 5 Rounds for Time (~25 min) 400m run → 20 push-ups → 30 air squats → 10 tuck jumps

Option C — AMRAP 25 5 burpees → 10 jump lunges → 15 sit-ups → 200m run. Repeat as many rounds as possible, no rest.

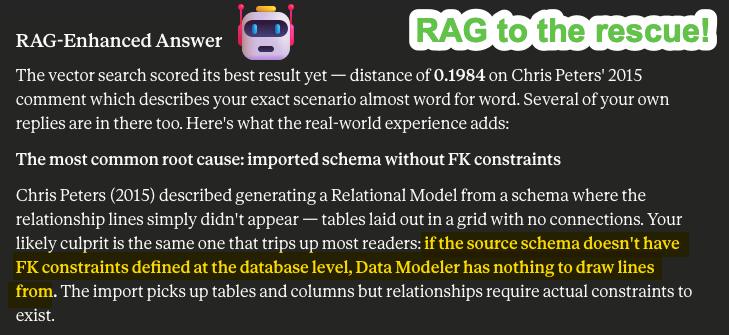

The Much Better, RAG infused answer

🎯 Step 3: RAG-Enhanced Answer

Agent talking to itself … Now that’s a meaningful contrast. Your vector store returned something completely different from what I suggested generically — and more useful.

What your data actually says about your tough sub-30 cardio sessions:

Your highest-scoring matches are almost all Dr. Mike’s “Saturday Endurance” format — structured For Time or AMRAP blocks combining running + kettlebell work + bodyweight movements, not the jump squat / air squat / push-up patterns I defaulted to generically.

The top result — “That heart rate is suspect” (Dec 2024) — is telling: avg HR 176, relative effort 94, 449 calories in exactly 29.9 minutes. That’s your ceiling for this format. The description says “Yes I’m still alive and I beat the time cap” — which tells me this one hurt.

My recommendation for Saturday:

For Time (30 min cap) — Dr. Mike’s Saturday Endurance format, adapted for solo/no-gym:

5 Rounds:

- 300m Run

- 15 KB Swings (24kg)

- 12 Goblet Squats (24kg)

- 9 Push-ups

Then: 600m Run

Then: 3 Rounds:

- 12 Ab Mat Sit-ups

- 10 Reverse Lunges

- 8 Shoulder Taps

This mirrors the exact structure your body knows from 8 matched sessions — alternating run segments with KB + bodyweight trisets.

Based on your history, expect avg HR ~160, ~350-430 cal, relative effort 50-60.

Ok, but how did it get my personal data to inform it’s advice?

Via our MCP Server integration, the Agent was able to query the table. But instead of looking at the data directly, it instead formulated a description of what it was looking for, and asked the database for the top 8 entries that came closest to that – via AI Vector similarity search.

SELECT

activity_date,

activity_name,

activity_type,

activity_description,

average_heart_rate,

calories,

ROUND(elapsed_time_2/60, 1) as duration_min,

relative_effort_2,

perceived_exertion,

VECTOR_DISTANCE(

activity_embedding,

VECTOR_EMBEDDING(ALL_MINILM_L12_V2 USING 'tough intense cardio under 30 minutes high heart rate no equipment needed' AS data),

COSINE

) as distance

FROM strava_activities

WHERE activity_embedding IS NOT NULL

AND elapsed_time_2 BETWEEN 600 AND 1800

ORDER BY distance ASC

FETCH FIRST 8 ROWS ONLYThe data was vectorized in my table via the ALL_MINILLM_L12_V2 transformer, so it was important the search phrase ‘tough intense cardio under 30…’ was also transformed via the same mechanism.

A few notes here:

- it’s hybrid search, we’re doing a VECTOR_DISTANCE calculation and ORDER BY to get the 8 closest matches

- we’re also using regular predicates to limit the data to activities that were long enough to provide good data

- it’s using the COSINE metric, and we’re using semantic similarity, so it’s important that our two vectors are pointing in the same direction, across all those 380+ dimensions. Also, the ALL_MINIMLM_L12_V2 is trained assuming COSINE similarity will be in the conversation (HuggingFace docs).

My 2nd example was more demonstrative, I think

I followed up my ‘tough Saturday’ workout recommendation with a ‘lazy Sunday recovery activity’ query.

After I go hard on saturday with this workout, i’ll need a nice, long, easy session on sunday. Something outside and something where my heart rate can say under 130 and let’s say also something i enjoyed

🧠 Generic Answer (cold)

For a recovery day after a hard cardio session, any coach would say:

Option A — Long Easy Run (60-90 min) Zone 2 pace, conversational, HR under 130. No intervals, no push. Just time on feet.

Option B — Long Hike (2-3 hours) Trails, elevation optional. Natural HR suppression from varied terrain and fresh air.

Option C — Easy Bike Ride (60-90 min) Flat route, casual pace. Great for flushing legs without loading them.

The universal advice: stay aerobic, stay outside, enjoy the scenery, don’t watch your pace.

RAG powered Answer

First it needs data to inform, enhance the LLM –

...

VECTOR_DISTANCE(

activity_embedding,

VECTOR_EMBEDDING(ALL_MINILM_L12_V2 USING 'easy long outdoor recovery session low heart rate run hike walk enjoyable outside nature' AS data),

COSINE

) as distance

...

WHERE activity_embedding IS NOT NULL

AND average_heart_rate < 130

AND average_heart_rate > 90

AND elapsed_time_2 > 2400

AND activity_type IN ('Run','Hike','Walk','Ride')

ORDER BY distance ASC

FETCH FIRST 8 ROWS ONLY🎯 RAG-Enhanced Answer

Your vector store didn’t even hesitate — every single result is a hike. Not a run, not a bike ride. Hiking is your outdoor recovery activity of choice, and your data proves it consistently delivers HR 98-127 across sessions ranging from 52 minutes to over 3 hours.

A few of your own descriptions say it all:

- “Beautiful, easy…just long.” — 10 Nov 2021, 128 min, HR 105

- “Nice, easy hike….hard one up for tomorrow” — 12 Aug 2019, 131 min, HR 108

- “Kids actually had fun this time” — 5 Jun 2021, HR 104

- “Washington and Jefferson’s backyard 🇺🇸” — 4 Jul 2021, HR 98, 151 min

And then there’s the chaotic one: “4 miles turned into 7.5, oops” — June 2024, 190 minutes, 7.5 miles, 254ft elevation, 1,659 cal. That one has your personality written all over it.

My RAG-enhanced recommendation for Sunday:

Easy Trail Hike — 2 to 2.5 hours

- Target HR: stay under 125 the whole time

- Distance: 4-5 miles with some gentle elevation (100-200ft) — enough to feel it, not enough to spike HR

- No pace target. Stop and look at things.

- Bring the family if they’re willing — your data shows you’ve done it, and at least once the kids had fun

My personal data gets real

I’m a big guy, significantly over 200lbs. So whenever I run, even when it’s not fast, it is never a relaxing or recovery-minded activity. My data demonstrates this, and that allowed the Agent to make a much better suggestion, FOR ME.

That’s really the benefit of RAG. Let’s help the LLM out with some facts, some background information, some flavor of what the real scenario is – with the data we already have in our (Oracle!) database. And I don’t need to copy it out to another vector specialized database, BECAUSE our Oracle AI Database already supports all the VECTOR features.

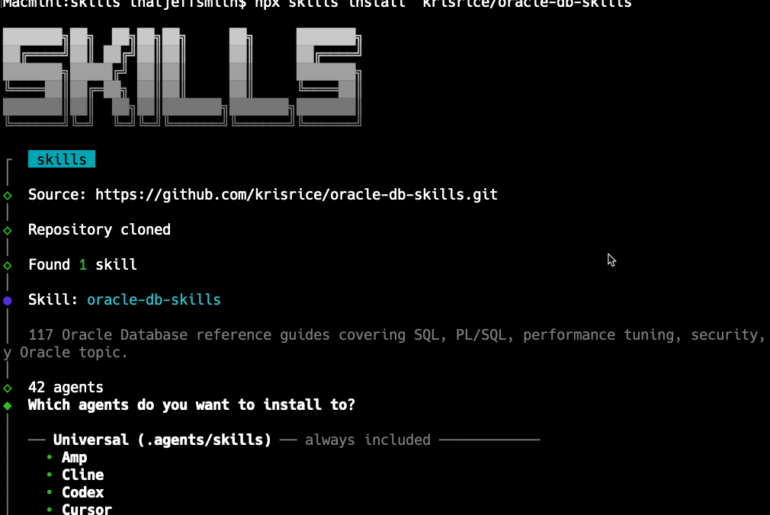

Wrapping things up with a skill

I’m going to probably be using this for real, so it would be nice to not have to go through the effort of teaching my agent how or what we did here, ever again. Just have it ‘do the needful.’

So to wrap up the chat session, I told it to generate a new skill based off our task, and auto-add it to it’s configuration.