I’ve talked about the Cart a few times. It’s interesting to describe what the Cart is for, because it can be used or so many different things. But in general, it allows you do perform a set of operations against a collection of database objects.

In this case I’m going to show you how to export several views and tables to an Excel file.

And the rest of this blog post will be done using just pictures – it’s that easy.

Note: I’m using version 17.4 in this example.

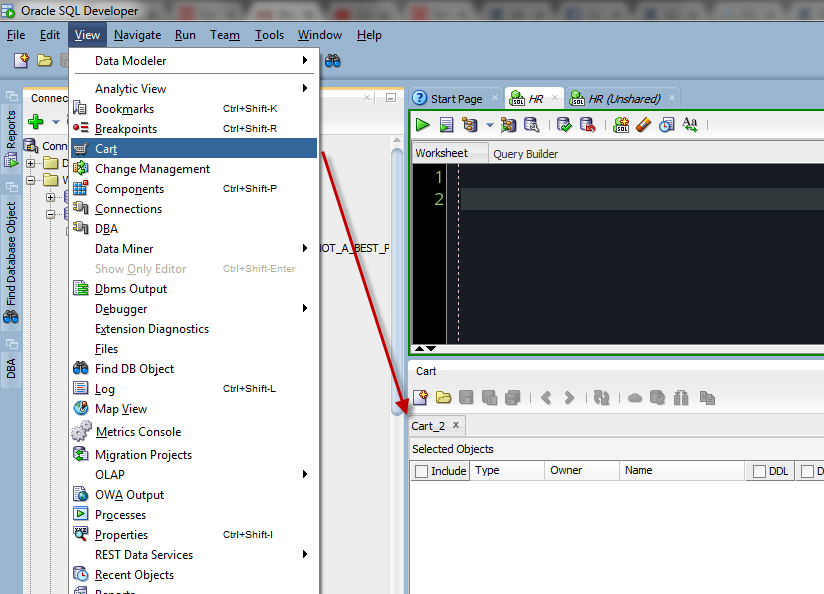

Step 1: Open the Cart

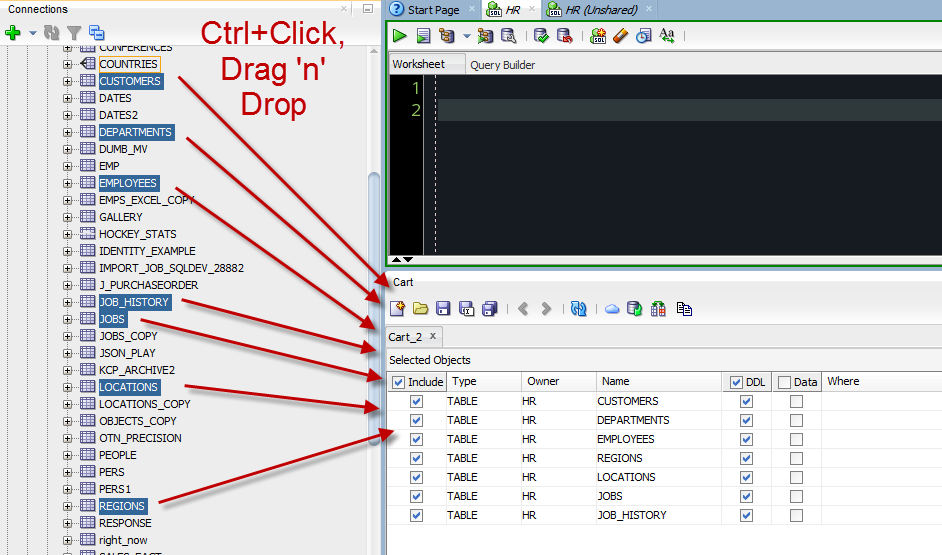

Step 2: Add your objects

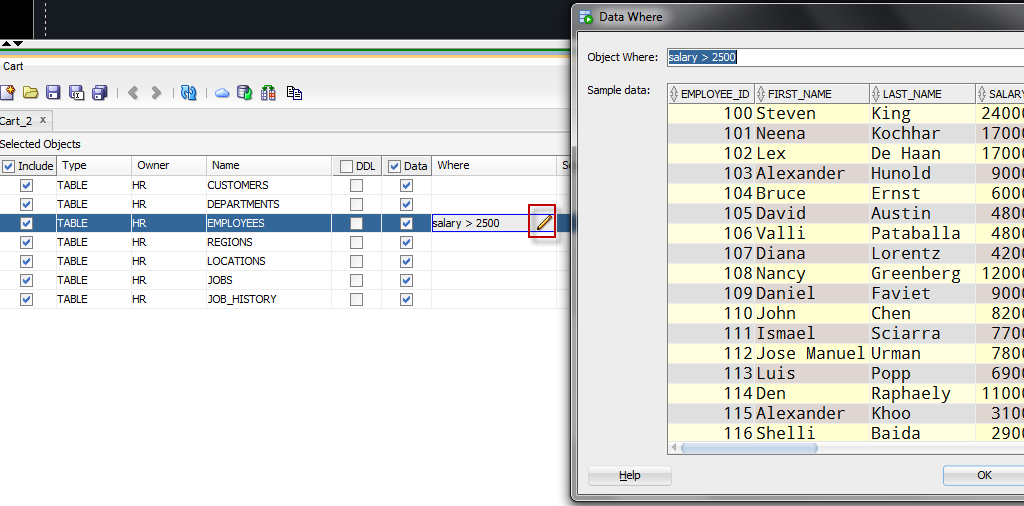

Step 3: Tell us what you want

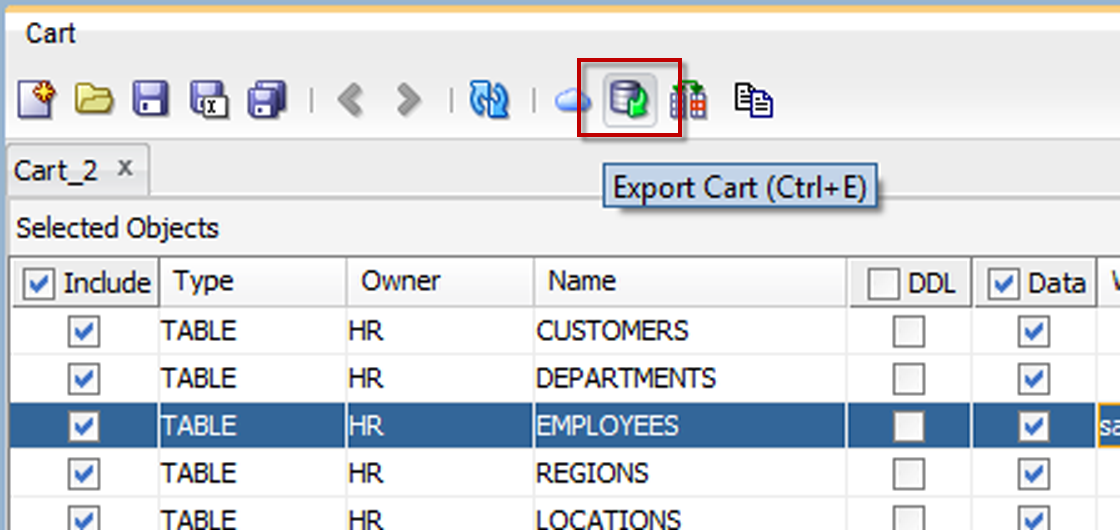

Step 4: Export

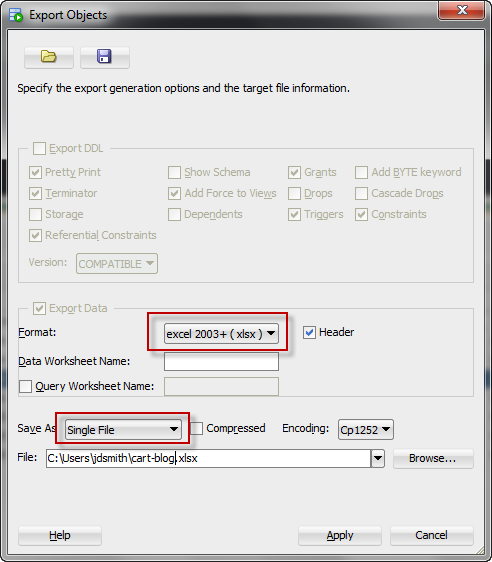

Step 5: Set your export options

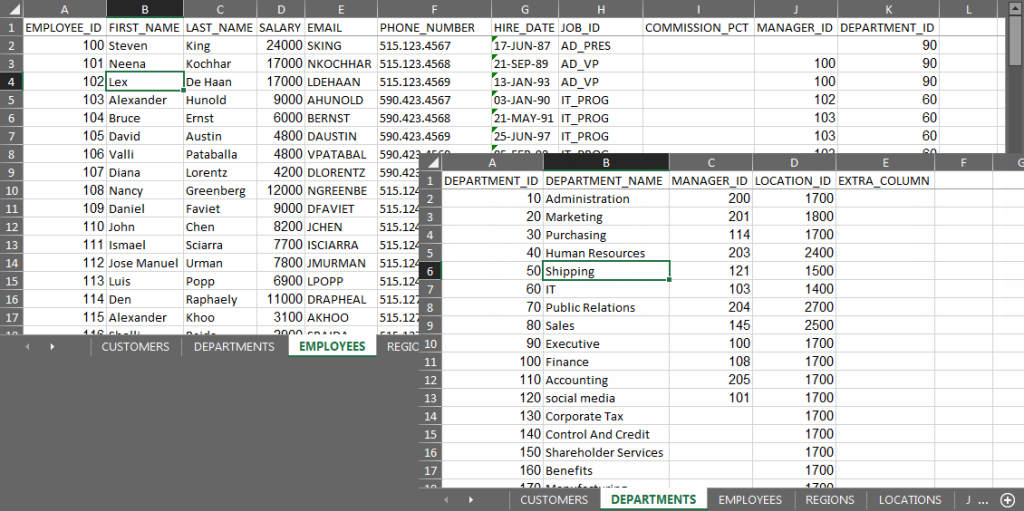

Step 6: Open the Excel file

24 Comments

Hello Jeff,

I have one requirement to export multiple table data which are owned by user “A” into excel.

But I am connecting to oracle database via user “B”, where user “B” has select privilege on all tables of user “A”

And hence I can’t see any table under “tables” option. How can I perform this with the steps you mentioned.

Instead of your tables, go to the Other Users section of your tree, then expand and dive into User A’s tables.

Hello,

You described a method to add multiple tables to the cart which are then exported to separate worksheets within an excel file.

Instead of selecting tables, I would like to have a single query be grouped by a category (for example, by department) and exported to separate worksheets. Do you have any advice?

Thanks,

Rick

Option 1: create a view for each workbook, export via cart as shown

Option 2: copy and paste

Hi Jeff,

Is there a way to use cart to export data to an existing CSV file. Like an append of the data to already existing CSV file, without over writing data in the file.

No but you could use SQLcl to pipe the data and >> append it to your file

Please is there any Artie on using SQLcl to pipe the data and append to CSV file. If not, can you provide guidance on to do it using SQLcl

I’m on vacation, you’re on your own with Google till Monday. But..from prompt run sqlcl with connect string and >> the output, same as you’d do with sqlplus.

I will wait till Monday, then. I also need to schedule the script to run monthly.

Hi Jeff,

Guess you are back. Please can you put me through on using SQLcl to append data to CSV file and also on how to automate it to run once every month

Thanks for this. One thing I noted is that I can’t add the same view more than once to a cart, even when I want to specify a different “Where” clause. Not sure why there’d be this limitation, or perhaps I’m not doing it correctly.

I don’t think we anticipated this requirement…is there a way you could OR your where clauses together in one go?

Unfortunately no as I need separate CSVs per the lookup clause for exporting an old accounting database into a cloud-based system. I’m having to manually export each view’s output 16 times per view. My Oracle DBA days ended about 20 years ago, so I’m unsure if there’s a better way to do this in a larger script using spooling or similar. I suppose I can either make 16 carts, or 16 new views for each view where the clause is included.

Thanks for the unexpected response Jeff. I am unworthy of your time.

Yeah I would export the whole table as-is to a temp table in the cloud db, then move the data over with 16 insert as select goes (or create table as selects)…I’m guessing that might even be faster.

Hi Jeff,

I am using SQL developer 17.4.0.355, build 355.2349

I have some views that i need to export the output into excel from sql developer. but i see no way to get the output of the query in excel.

Please help me find out how to export data query output into excel.

Regards,

Yasir

Export wizard.

Check views.

Check data

Single File, xlsx.

Use the wizard to pick the right views.

Or use the Cart.

Excel How to post

Important step is to select data and not ddl in the window that displays the list of tables.

It would be nice if we can export multiple sql result into single excel.

Understand it is not supported by now.

Look forward to it.

Is it possible to do similar export with multiple SQL’s without creating views.

No.

Is there any equivalent process for importing?

Once a week, we’re supplied with an Excel spreadsheet with six tabs. Each tab needs to be imported to a specific table (which needs clearing down first). At the moment, we truncate all the tables, then use the Import Data option on each of the tables in turn, selecting the same file, but the next tab, which is kind of boring.

Unfortunately, it’s further complicated by the fact that three of the tabs require attention in the Column Definition step of the Import Wizard (to clean up column names, or date values). Which doesn’t make it any more exciting 😉

Change your process.

Instead of doing this manually with Excel and SQLDev – automate it.

Send those CSVs to the server, and create External Tables. Then create a job that weekly purges the source tables and does a INSERT AS SELECT from the externals.

In SQLDev, you can setup the import scenarios, and save them to be re-used later, either in the UI or our CLI.

I’m not sure we get to change the start of our process, so it’ll still mean I get the spreadsheet, and would then need to manually (or macro) split it to different CSV files, and upload them. And then run the job to load the externals. It’s not much of an improvement (I may not need to keep reconfiguring the column definitions). So it sounds like the current method isn’t too sub-optimal, given the conditions.

When/if we can improve the mechanism which gives me the spreadsheet in the first place (such as having the underlying database connection, and raw queries), we can fix the whole thing and do direct database-to-database transfers. That’s the goal anyway 🙂

Right, the entire process/mechanism needs to be fixed – and good luck!