SQL Developer is a pretty mouse-heavy tool. By that I mean that many of the functions are accessed via mouse-clicks. When browsing tables in the tree you may have noticed that you can expand a table node to display the table columns.

There are some pretty cool features available under the context menu when clicking on a table column:

Some of these actions are available under the table right-clicks, but a couple are not, ‘Encrypt’ and ‘Decrypt.’

One that you may find useful is a dynamic table refactoring feature called ‘Normalize.’

Normalize a Table Column

Sometimes you find a table that has all the data in it. Like a column called ‘Country.’ And instead of having a countryID which points to a child country table record via a foreign key constraint, they just have the text value as part of the ‘master’ record. This isn’t ideal for many situations but it can be a pain to fix after-the-fact.

This SQL Developer feature will ask you a few questions then generate a dynamic script to split that data out to a new table, and populate new IDs in the look-up table.

Wait, this won’t work! I’m trying to do this on a partitioned table. Lots of things you can’t do column wise when a table is partitioned. So let’s try again on another table – my iTunes table I created in my earlier ‘Free Beer’ post.

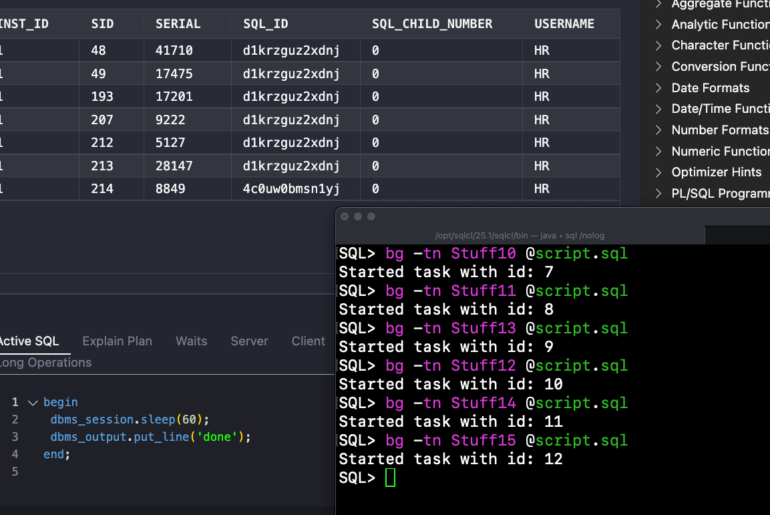

Here’s the script that SQL Developer generates:

DECLARE l_sql VARCHAR2(32767); l_col_size NUMBER; PROCEDURE run(p_sql VARCHAR2) AS BEGIN EXECUTE IMMEDIATE p_sql; END; BEGIN FOR r IN ( SELECT data_type FROM sys.all_tab_cols WHERE owner = 'HR' AND table_name = 'ITUNES_MUSIC' AND column_name = 'ARTIST' ) LOOP IF ( r.data_type NOT IN ('VARCHAR','VARCHAR2','CHAR','NCHAR','NVARCHAR','NVARCHAR2') ) THEN raise_application_error (-20001,'Normalize only supported on VARCHAR,VARCHAR2,CHAR,NCHAR,NVARCHAR,NVARCHAR2'); END IF; END LOOP; SELECT data_length INTO l_col_size FROM sys.all_tab_cols WHERE owner = 'HR' AND table_name = 'ITUNES_MUSIC' AND column_name = 'ARTIST'; run('create table MUSIC_ARTISTS (ARTIST_ID number not null primary key, ARTIST varchar2('||l_col_size||') not null)'); run('create sequence ARTIST_SEQ'); run('create or replace trigger "T_ARTIST_ID_SEQ"'|| ' before insert or update on '|| ' MUSIC_ARTISTS for each row '|| ' begin '|| ' if inserting and :new.ARTIST_ID is null then '|| ' for c1 in (select ARTIST_SEQ.nextval nv from dual) loop '|| ' :new.ARTIST_ID := c1.nv; '|| ' end loop; '|| 'end if; '|| 'end;'); run('insert into MUSIC_ARTISTS( ARTIST ) select distinct ARTIST from "HR"."ITUNES_MUSIC" where ARTIST is not null'); run('alter table "ITUNES_MUSIC" add ARTIST_ID number'); run('update "ITUNES_MUSIC" x set ARTIST_ID = (select ARTIST_ID from MUSIC_ARTISTS where ARTIST = x.ARTIST)'); run('alter table "ITUNES_MUSIC" drop column ARTIST'); run('alter table "ITUNES_MUSIC" rename column ARTIST_ID to ARTIST'); run('alter table "ITUNES_MUSIC" add foreign key (ARTIST) references MUSIC_ARTISTS(ARTIST_ID)'); END;

Once I click ‘OK’ on the dialog, the script will run and I’ll have:

- A new table with the Artist column refactored and linked to the original table

- The existing table data has changed with the old column rebuilt and pointing to the new table

- A new sequence and trigger setup to handle new records on an INSERT

Here’s a picture of what happened (you can build your own model using the ctrl-drag-and-drop trick.)

17 Comments

Hi Jeffs … How to download this software? plz Help me …

Hi Mina,

I made a YouTube video, shows how to get up and running in 5 minutes or less: http://www.thatjeffsmith.com/archive/2012/05/getting-started-with-sql-developer-less-than-5-minutes/

Check that out and let me know if you have any questions!

Jeff

Not sure if they’re really “normalisation” actions but I can think of a few table modifications that I’d categorise as “refactoring”:

* split a column into two (given a delimiter character or string), and vice versa; optionally add virtual column(s) to preserve the original column(s)

* split off multiple columns to a separate table (e.g. if I have a “persons” table with some “address” fields which we want to split off into a separate “person addresses” table) – of course, this is just an extension on what you have already.

* “unpivot” – say I have several columns in a table that are the same thing – e.g. Person.Home_Number_1, Home_Number_2, Work_Number, Mobile_Phone_Number, Fax_Number; and I want to split these off into a separate table, which will have a “Phone_Number” column as well as a type column (‘Home’/’Work’/’Mobile’/’Fax’).

* “pivot” – opposite to the above, of course 🙂

* perhaps some transformations that act on an object type column – e.g. to remove the object type and replace with ordinary columns

Just ideas, not sure if all of these are necessarily going to be generally useful.

Morning Jeff,

looks interesting but I have a pit to nick with the generated code, this bit particularly:

if inserting and :new.ARTIST_ID is null then

which is part of the trigger to assign a new artist_id from the sequence. I personally do not like code that allows for subtle future bugs. The code should read:

if inserting then

As it exists, it’s allowing the user to supply a value for the artist_id and as long as that is above the highest value already supplied by the sequence, all is well, until the day that the sequence supplies that same value and the trigger blows up.

Basically, if you use a sequence to supply an ID, the user must not be allowed to ever supply one manually. And by user I also include the application(s) using the data of course.

Cheers,

Norm.

To be fair, I’d say that it doesn’t matter whether SQL Developer generates the code that way or the alternative, you’re going to have the potential for subtle bugs anyway – so they may as well aim for something that will be most generally useful.

If the trigger doesn’t check if ARTIST_ID is null, and the application were to get set ARTIST_ID from the sequence, the trigger would go ahead and overwrite it with a new value from the sequence; now, if the application uses the original id elsewhere (e.g. for saving into a child table), it will fail (e.g. with foreign key violations).

I’m not saying this is the right way to go, and I’m not necessarily disagreeing with the objection, but either way might be legitimate in certain circumstances – at the end of the day, anyone who blindly accepts the code generated by SQL Developer as is without understanding it, validating it and testing it, deserves what they get 🙂

I don’t trust any code that is written for me, not without verifying it first. I’m a super-proponent of transparency and I want to know what the tool is doing for me so I can approve or improve or even reject it outright.

So if we don’t call it normalize, what SHOULD we call it? I’m not sure that ‘refactor’ on a table object would really tell the user what to expect, whereas I think the ‘normalize’ wording is almost self-explanatory.

Jeff,

normalise/normalize is correct. It’s what you are doing. In my case, I’d expect it to allow me to “normanise” my tables. 😉

Cheers,

Norm.

You could in fact build your own ‘Normanize’ feature via the XML Extensions. If history has any say in the matter, it would quickly grow to take over the world 😉

Agreed, a menu option that merely says “refactor” is not good enough – in my head was a normalisation/refactoring submenu or popup dialog which allows the user to choose what modification to make to the table.

My main beef with the “normalise” label is that there are a number of different changes I might want to make to a table to make it “more normalised”. What SQL Developer does right now is just one example – perhaps in the future further options will be added that assist in refactoring/normalisation.

In other words, a developer who picks the “normalise” menu option would expect to result in a table that has been “normalised”, as if this feature is the only thing you need to use to make the table such. Whereas, a developer who picks a “refactor: create parent table from column” menu option would have a better idea of what they’re going to get from it. I think it’s more intuitively obvious that the outcome of refactoring is a new set of code; that might subsequently be the subject of further refactoring.

What other normalization activities would you like to see us add Jeff?

Excellent point – running the ‘Normalize’ feature won’t ensure your table is ‘normalized’, it just performs one of many possible normalization exercises.

How do you know the application hasn’t already confirmed the ID value is available?

Here’s the good news Norm – since we expose the SQL, the user is able to copy the -Is-it-refactoring-or-is-it-normalizing-script- and change it to suit their needs.

I do like your approach, all or nothing. Of course sequences can break on their own accord eventually anyway…

Jeff,

how exactly did the application determine that that value was available? Fine if it got the next value direct from the sequence, but I’ve seen multi-user systems just check the value from user/all/dba_sequences! Go figure! (Yes, of course the app owner schema “needed” dba privs to work!)

Also, exposing the SQL is a good thing in my opinion, it’s how I was able to “nick that pit”, but I wonder how many DBAs using the feature will have less of a programming background than I do, and will simply accept what is offered, without question?

Sequences break? Surely not! 😉 I’ve seen them break in test when a database refresh was done by dropping the tables, view etc, but forgetting to drop the sequences and importing with ignore=y. Oops!

My all or nothing approach works fine for me, I admit, other mileages may vary as we all know. However, it needn’t be the application that sets up a forthcoming failure, it could be a DBA/developer script that decides to shove in a new value. I’m just interested in protecting my data.

Still, the feature under discussion seems like a good one, so I’m not completely against it! 😉

Cheers,

Norm.

I don’t know Andrew, the first paragraph of this topic on Wikipedia seems to match up with this feature. Where do you think it falls short?

http://en.wikipedia.org/wiki/Database_normalization

I know there are more nits, so keep picking!

I agree with Andrew Wolfe, this is refactoring, not normalisation. To expand: SQL Developer appears to provide a tool that helps to refactor a physical data model in *one particular way* in order to deal with *one* particular symptom of denormalisation (3NF). In fact, the Relational Model doesn’t actually prescribe that you must have surrogate keys for all entities – you can have an “Country Name” right there in your Brewery relation and still satisfy 3NF – you just need a second relation “Countries” with a key on “Country Name”. It’s more for convenience (and to get around the limitations of the DBMS) that we use a surrogate key (e.g. “Country ID”) instead.

I suspect the opening paragraphs of the wikipedia article cited are not as precise as they could be (but probably simplified for general consumption).

Another nit: is this really normalizing? I think I might label this ‘refactoring’…

Well you found a nit in my hair, so I need to thank you!

Yes, very clear distinction here. I made a conscious decision to alter this table and my data model. The tool just did the heavy lifting for me.

Our tools won’t look at your data, cough, and prescribe medication. You get to play data doctor.

I’ll quote someone who I thought totally nailed this point –

‘I agree that a fool with a tool is still a fool, but I would argue that craftsman with the right tool can be an even better craftsman. In essence, the problem isn’t the tool,it is the person wielding the tool.’ – http://bornagainagilist.wordpress.com/2012/04/20/how-oracle-helped-to-make-my-data-model-agile/

I’m going to nitpick here, only because this is a pain point for me. It takes knowing the semantics (meaning) of a fact to know how to normalize a relation. So in this case you, a human, found a column that needed to be normalized. SQL Developer seems to do a wonderful thing in helping you implement that decision. But you did the normalizing.

I see too many people making arbitrary statements like “X number of joins is too many” (for what?) or “a table can only have 5 relationships” (what DBMS is that?). Database design requires a brain and we seem to be lacking in those in the data world….